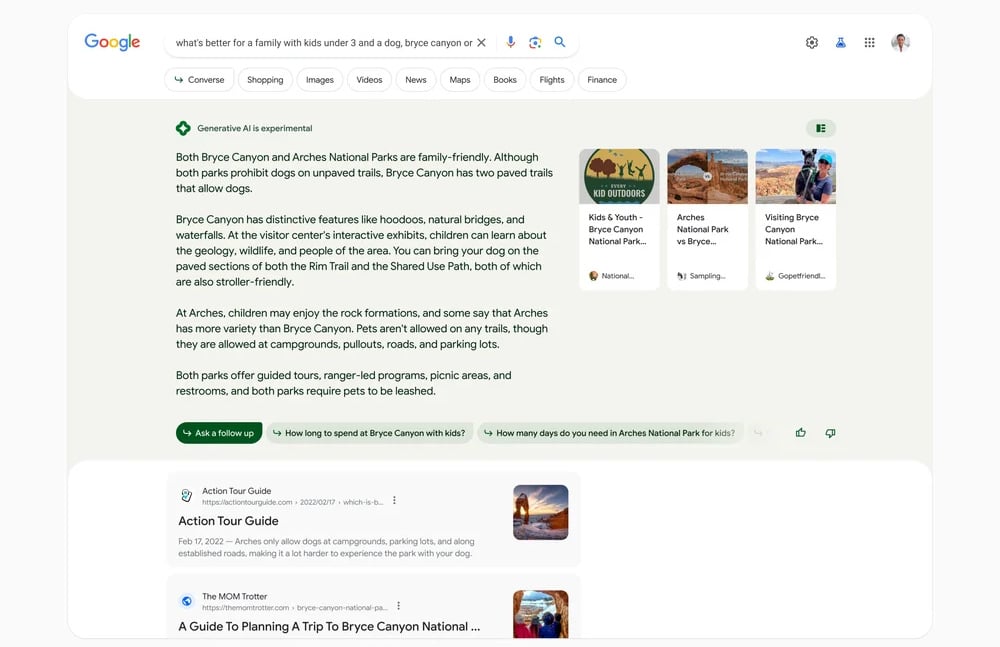

If you’re in the US, you might see a new shaded section at the top of your Google Search results with a summary answering your inquiry, along with links for more information. That section, generated by Google’s generative AI technology, used to appear only if you’d opted into the Search Generative Experience (SGE) in the Search Labs platform. Now, according to Search Engine Land, Google has started adding the experience on a “subset of queries, on a small percentage of search traffic in the US.” That is why you could be getting Google’s experimental AI-generated section even if you haven’t switched it on.

Another win for the Startpage gang.

Technically, if you submit a query to the search engine, you do so because you want the best possible answer to a question without having to do too much digging.

So does it matter if it uses AI to help you? I say it’s a great feature.

I’m searching to get specific information, and good information. I’ve seen LLMs make shit up and be wrong enough times for me not to trust them. I’d rather turn that feature off.

No. A lot of times I’m looking to compare many answers. I’ll give you an example.

If I want to look for interesting barbecue rubs that I haven’t tried before, I’ll query a search engine. Historically (not so much recently) Google has been better at searching through forums than a direct forum search. So I can check many different sources for the ratios people are using and make my decision.

Google’s half-baked AI is really terrible right now. It has a memory of about two answers, barely understands context, and hallucinates more often than both Copilot and ChatGPT.

Now I’m looking for a coffee rub and it’s giving me injection advice (happened when I tested Gemini), it gets barbecue styles mixed up, doesn’t follow dietary restrictions that are explicitly stated, and will give you recipes for the wrong cut and type of meat.

It’s not ready, and anyone trusting it for an answer to a question is going to have a bad time. If you have to verify it by checking a bunch of links anyway, then it’s not only worthless, it’s making search take longer and taking up screen real estate.

Wooooo what?

Brisket coffee rub is fairly common. I only know one guy who uses a coffee injection because it’s not common, although other injections are pretty common for brisket.

I have no idea why it went off on that particular tangent. I guess whatever barbecue data it was trained on had a lot of injection advice along with the coffee rubs.

We’re in the technology sub. People here are old enough to know how to Google (old forums, preferably Reddit, as Lemmy is absent), but they don’t know how to use an AI effectively (just look at how they’re trying to justify that). Don’t worry about the downvotes and their nonsense responses. Those are the same people who microwave their water instead of using an electric kettle.

I actually get a kick out of the downvote score.

As on all social media, people here (group)think that they are the smartest. But Lemmy is also a bubble, one with people who don’t want to innovate or experience new things. Very weird for something so tech-focused.